A team from a leading London facility has used an augmented reality (AR) headset to examine CT images alongside an endoscopic video of patient anatomy while simulating minimally invasive surgery. The technology led to improved operating times and surgical proficiency.

First author Hasaneen Al Janabi, from King's College London, and colleagues explored the feasibility of using AR as an alternative to conventional image guidance for ureteroscopy, a common procedure for addressing urinary stones.

For minimally invasive surgeries such as ureteroscopy, operating clinicians generally rely on a live endoscopic video of the patient for guidance during the procedure. A downside of this technique is that it requires clinicians to switch their gaze between the surgical site and a computer monitor displaying the endoscopic view. This disruption to the visual-motor axis during surgery has been associated with a variety of problems, from restricting surgical performance to increasing the risk of injuries, the authors noted in an article published online on 18 June in Surgical Endoscopy.

In the current study, Al Janabi and colleagues tested the effectiveness of using augmented reality to address the limitations of standard ureteroscopy. They specifically evaluated the capacity of an AR headset (HoloLens, Microsoft) to facilitate ureteroscopy simulations for 72 participants of varying expertise -- including medical students (novice), urological residents or trainees (intermediate), and endourology specialists (expert).

The researchers tracked the participants' operating times, and an expert endourologist scored the participants' performance on a 35-point rating scale based on the Objective Structured Assessment of Technical Skills (OSATS).

Overall, they found that using AR led to improvements in the participants' technical proficiency as well as in the amount of time it took them to complete the procedure simulation. On average, the AR technique was associated with a decrease in operating time of 73 seconds and an increase in proficiency score of 4.1 points, compared with the conventional method.

AR vs. standard method for image-guided minimally invasive surgery

| | Conventional monitor | AR headset |
|---|---|---|
| Operating time | 5 minutes, 31 seconds | 4 minutes, 18 seconds |
| Rating of surgical proficiency (OSATS score) | 17.3 | 21.4 |

The participants also completed follow-up questionnaires about their experience; the vast majority reported that the ability to see CT scans with AR technology during the procedure was a useful feature. In addition, 95% of the participants agreed or strongly agreed that AR will not only have a role within surgical practice but also be feasible for clinical application. Roughly 97% agreed or strongly agreed that AR will have a role in surgical education.

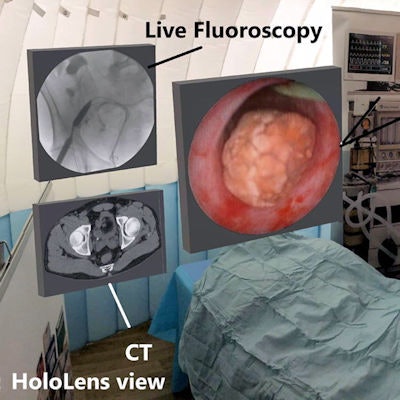

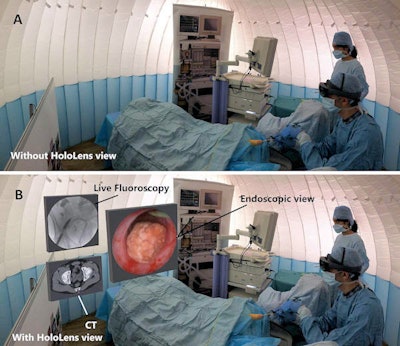

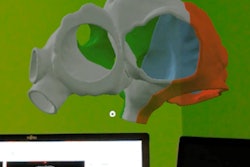

AR technology enables clinicians to look at CT scans alongside a real-time endoscopic view of patient anatomy during minimally invasive procedure simulations. Image courtesy of Al Janabi et al. Licensed under CC BY 4.0

"The [AR] device facilitated improved outcomes of performance and was widely accepted as a surgical visual aid by the study participants," the authors wrote. "HoloLens represents a feasible alternative to conventional endoscopic monitors, possibly by aligning the surgeon's visual-motor axis. The device is operated using gestures, and thus sterility is not compromised and can support safe practice."
