A review of commercially available AI software for lung nodule analysis in Europe has found widespread capabilities for detecting and measuring standard nodules, but also a lack of high-level clinical evidence and of support for assessing endobronchial or cystic lesions.

The analysis, published 7 May in European Radiology, showed that while many of the CE-marked applications included in the study could support CT-based lung cancer screening (LCS) programs, caution and post-implementation monitoring are needed.

“Limited high-level clinical evidence complicates integrating AI into guidelines, securing reimbursement, and formulating recommendations for its use in lung cancer screening programs,” wrote corresponding author Noa Antonissen, of Radboud University Medical Center in the Netherlands, and colleagues.

The researchers derived six core LCS tasks (nodule detection, classification, measurement, growth assessment, malignancy risk estimation, and structured management) from four nodule management recommendations: Lung-RADS 2022, British Thoracic Society (BTS) guidelines, European Union Position Statement (EUPS), and European Society of Thoracic Imaging (ESTI).

The research encompassed 16 products from 16 different vendors. Ten vendors completed questionnaires confirming their products' capabilities; for software from non-responding companies, the authors drew on public documentation. The authors found the following:

- Detection and measurement of solid and subsolid nodules: 14 products

- Growth assessment: 12 products

- Malignancy risk estimation: nine products (PanCan in five, AI-based scores in four)

- Support for endobronchial or cystic lesions: zero products

In other findings, the researchers noted high task coverage (defined as more than 75%) in 10 products for the EUPS and in four products for BTS guidelines. However, they found that no software achieved high coverage for Lung-RADS or ESTI guidelines.

After identifying a total of 60 peer-reviewed studies on the use of these software applications, the researchers found that 42 (70%) assessed diagnostic accuracy efficacy, 15 (25%) included diagnostic thinking efficacy, and 13 (21.7%) covered technical or potential clinical efficacy.

Furthermore, therapeutic efficacy was evaluated in only one study (1.7%) and no studies assessed patient outcome efficacy or social efficacy.
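The percentages above follow directly from the reported study counts. As a minimal sketch (the variable and function names are illustrative, not taken from the study, and it assumes a study may count toward more than one efficacy level, which is why the counts exceed 60 in total), the shares can be reproduced as follows:

```python
# Counts as reported in the article, out of 60 peer-reviewed studies.
# Assumption: one study may be counted under several efficacy levels.
TOTAL_STUDIES = 60

studies_by_efficacy_level = {
    "diagnostic accuracy": 42,
    "diagnostic thinking": 15,
    "technical or potential clinical": 13,
    "therapeutic": 1,
    "patient outcome": 0,
    "social": 0,
}


def share(count: int, total: int = TOTAL_STUDIES) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


shares = {level: share(n) for level, n in studies_by_efficacy_level.items()}

for level, pct in shares.items():
    print(f"{level}: {pct}%")
```

Seen this way, the evidence base sits almost entirely at the lower efficacy levels, which is the gap the authors highlight.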

“Future research should prioritize prospective post-deployment studies in real screening programs that report clinical and program outcomes, workflow impact, generalizability, equity, and governance,” the authors wrote. “As lung cancer screening programs expand, generating and publishing such real-world evidence will be crucial for responsible AI adoption at scale.”

Other members of the research team included Steven Schalekamp and Dr. Colin Jacobs of Radboudumc, Prof. Dr.-Ing. Horst Hahn of the University of Bremen and Fraunhofer MEVIS in Bremen, Germany, and Kicky van Leeuwen, PhD, of Romion Health and Health AI Register in Utrecht, the Netherlands.


In a LinkedIn post about the research, Van Leeuwen noted that Germany has just begun rolling out nationwide lung cancer screening and that AI is no longer optional; computer-assisted detection and volumetry are mandatory. As a result, every participating center in Germany now has to choose an AI application.

However, there is limited guidance on what level of evidence is sufficient, how to perform local performance validation, and how to organize ongoing quality assurance, she said.

“In my opinion, this creates both risk and inefficiency, with each hospital effectively having to build its own evaluation, procurement, and governance approach,” she said.

Meanwhile, in the Netherlands, preparations are underway to implement AI in the breast cancer screening program using a different model: centralized, rigorous validation protocols prior to procurement and structured post-deployment quality control, Van Leeuwen said. The trade-off is a longer path to large-scale implementation.

“Two countries, two screening programs, both adopting AI, but with very different approaches to implementation and quality assurance,” she said.

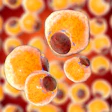

![Overview of the study design. (A) The fully automated deep learning framework was developed to estimate body composition (BC) (defined as subcutaneous adipose tissue [SAT] in liters; visceral adipose tissue [VAT] in liters; skeletal muscle [SM] in liters; SM fat fraction [SMFF] as a percentage; and intramuscular adipose tissue [IMAT] in deciliters) from MRI. The fully automated framework comprised one model (model 1) to quantify different BC measures (SAT, VAT, SM, SMFF, and IMAT) as three-dimensional (3D) measures from whole-body MRI scans. The second model (model 2) was trained to identify standardized anatomic landmarks along the craniocaudal body axis (z coordinate field), which allowed for subdividing the whole-body measures into different subregions typically examined on clinical routine MRI scans (chest, abdomen, and pelvis). (B) BC was quantified from whole-body MRI in over 66,000 individuals from two large population-based cohort studies, the UK Biobank (UKB) (36,317 individuals) and the German National Cohort (NAKO) (30,291 individuals). Bar graphs show age distribution by sex and cohort. BMI = body mass index. (C) After the performance assessment of the fully automated framework, the change in BC measures, distributions, and profiles across age decades were investigated. Age-, sex-, and height-adjusted body composition reference curves were calculated and made publicly available in a web-based z-score calculator (https://circ-ml.github.io).](https://img.auntminnieeurope.com/mindful/smg/workspaces/default/uploads/2026/05/body-comp.XgAjTfPj1W.jpg?auto=format%2Ccompress&fit=crop&h=112&q=70&w=112)