Dr. Benoît Rizk operates at the intersection of private practice, R&D leadership, and EU-funded research. As a practicing radiologist and head of R&D at the 3R Swiss Imaging Network, his approach is defined by operational necessity rather than theoretical research.

Not a buyer, but a builder

While most foundational AI research in radiology remains concentrated in public academic centers, 3R functions as a real-world innovation hub. It is also the only private partner in a European digital twin consortium led by the Technical University of Munich, positioning the network not as a passive buyer of AI tools, but as a co-developer, validator, and early-stage testing ground.

The five-year dataset mentioned earlier, presented by Prof. Sergey Morozov at RSNA 2025, covers nearly 400,000 AI-processed imaging studies across 20 centers. It shows adoption by 91% of radiologists and turnaround time reductions of up to 33% in trauma radiography. But the numbers are only the surface. What makes this dataset unusual is that it reflects not pilot projects, but operational reality.

Governance comes first

Dr. Benoît Rizk, Chief Medical Innovation Officer at 3R, stresses the operational nuances of AI governance. Image courtesy of Claudia Tschabuschnig

Rizk’s starting point: governance. You need a defined strategy, a leadership structure, continuous communication, and a trained clinical team. You also need partners, platforms that provide validated tools, because running regulatory validation internally is not feasible at scale. Infrastructure follows: low-latency delivery, local compute capacity, and close coordination with IT. With cyberattacks rising in healthcare, AI deployment is inseparable from security architecture.

What sets the 3R model apart, however, is what happens after deployment, the part most institutions underestimate. The process is structured and deliberately sequential: clinical need identification, market evaluation, user preference assessment, shadow mode testing, single-site pilot, then controlled rollout.

Crucially, this pipeline includes something most hospitals lack: an exit strategy. 3R's plug-and-unplug model means tools are continuously evaluated and actively removed when performance drops or they fail to deliver value. This reframes AI from a sunk investment into a modular system. Removing a tool is not failure; it is governance in action.
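The sequence of stages and the exit path can be pictured as a small state machine. The sketch below is purely illustrative and is not 3R's actual system: the `Stage` names mirror the steps listed above, while the `next_stage` function and the `UNPLUGGED` state (used here for both early rejection and later removal) are assumptions made for the example.

```python
from enum import Enum


class Stage(Enum):
    # Stage names follow the article; UNPLUGGED is a hypothetical exit state.
    CLINICAL_NEED = 1
    MARKET_EVALUATION = 2
    USER_PREFERENCE = 3
    SHADOW_MODE = 4
    SINGLE_SITE_PILOT = 5
    CONTROLLED_ROLLOUT = 6
    UNPLUGGED = 7


def next_stage(stage: Stage, passed_review: bool) -> Stage:
    """Advance one step after a passed review; unplug after a failed one."""
    if stage is Stage.UNPLUGGED or not passed_review:
        return Stage.UNPLUGGED
    if stage is Stage.CONTROLLED_ROLLOUT:
        # A deployed tool stays deployed but keeps being reviewed.
        return Stage.CONTROLLED_ROLLOUT
    return Stage(stage.value + 1)
```

In this sketch a tool in controlled rollout never reaches a final "done" state: every review either keeps it in place or unplugs it, which is the point of treating removal as part of governance.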

Performance is local

The same mindset extends to validation, because performance is local. A model trained on tuberculosis data from African populations may not perform as well on Norwegian patients. Imaging equipment, acquisition protocols, and workflow differences all affect outputs. Vendor benchmarks rarely reflect this complexity. “You test it yourself,” he says. “If it works, you plug it, and you monitor it.”
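In its simplest form, "testing it yourself" means comparing a tool's outputs with locally confirmed findings and computing standard metrics such as sensitivity and specificity. The following is a minimal sketch under that assumption; the function name `local_validation` and the boolean input format are hypothetical and do not describe 3R's actual validation protocol.

```python
def local_validation(ai_positive, truth_positive):
    """Return (sensitivity, specificity) of AI flags against local ground truth.

    Both arguments are equal-length sequences of booleans, one entry per study.
    A metric is None when its denominator is zero.
    """
    if len(ai_positive) != len(truth_positive):
        raise ValueError("inputs must have the same length")
    tp = fp = tn = fn = 0
    for ai, truth in zip(ai_positive, truth_positive):
        if ai and truth:
            tp += 1
        elif ai and not truth:
            fp += 1
        elif not ai and truth:
            fn += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    specificity = tn / (tn + fp) if (tn + fp) else None
    return sensitivity, specificity
```

Running the same calculation per site, scanner, or protocol is one way to surface the local differences that a single vendor benchmark hides.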

Another layer of complexity emerges when AI disagrees with the radiologist. These cases are not edge scenarios; they are daily reality. 3R is actively building structured workflows for handling disagreement, because responsibility becomes ambiguous when both human and system can be wrong. At the same time, Rizk warns against a growing behavioral risk: overreliance.

He recalls a case where a report justification read, “the AI said so.” That, he says, is precisely what must be avoided. AI will reshape radiology one task at a time, but clinical authority must remain human. Competence is not optional; it becomes more important.

Integration decides everything

Adoption, meanwhile, is uneven. Radiologists do not want blanket automation; they want targeted relief. In areas like DEXA-based osteoporosis assessment, demand for full automation is high because tasks are repetitive and low in cognitive value. In others, resistance remains strong. Automation is accepted where it removes friction, not where it replaces expertise.

Efficiency gains depend less on the algorithm than on integration. Fully embedded AI in structured reporting workflows saves time. Partial integration, requiring extra clicks or separate PACS series, can slow radiologists down. Even small inefficiencies accumulate. As Rizk puts it, a single additional step can act “like a grain of sand in a functional machine.”

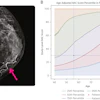

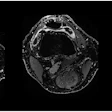

Data from the 3R knee MRI study reflects this: fully integrated AI reduced reporting time, while partial integration showed no benefit or even negative impact.

To manage this complexity, the network tracks not just technical performance but user acceptance, using Net Promoter Scores. The variation is striking: some tools achieve extremely high approval, while others receive strongly negative ratings. This spread reflects a simple reality: AI maturity is uneven, and not all tools deserve to stay.
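For readers unfamiliar with the metric, a Net Promoter Score is conventionally computed from 0 to 10 ratings as the percentage of promoters (9 or 10) minus the percentage of detractors (0 to 6), giving a value between -100 and +100. The sketch below shows that standard calculation; the function name and the example ratings are illustrative and are not 3R data.

```python
def net_promoter_score(ratings):
    """Standard NPS: % of ratings >= 9 minus % of ratings <= 6, on a 0-10 scale."""
    if not ratings:
        raise ValueError("at least one rating is required")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


# Made-up ratings for two hypothetical tools.
well_liked = net_promoter_score([10, 10, 9, 8])
disliked = net_promoter_score([2, 5, 6, 9])
```

Because passive ratings (7 or 8) count toward the total but toward neither group, a tool can be widely tolerated and still score near zero.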

Why strong tools fail in practice

External validation reinforces this point. Large-scale datasets such as those analyzed by Sergey Morozov show how performance shifts across populations and settings, underlining the need for continuous real-world evaluation rather than reliance on static benchmarks.

The generative AI wave introduces a different kind of risk: not hallucination, but alignment. Systems that confirm user assumptions rather than challenge them can create false confidence. A radiologist asking for confirmation may receive agreement, not verification. In parallel, cybersecurity risks increase as more tools connect to clinical systems, expanding the attack surface, especially when third-party integrations are involved.

Vendors, Rizk argues, often fail in two areas: lack of clinical understanding and slow operational response. At 3R, AI implementation is treated as a partnership, not a transaction, with regular operational meetings and long-term strategy alignment. Without this, even strong tools fail in practice.

And then there is workforce impact. Fully automated pipelines for CT and MRI are already on vendor roadmaps. Parts of the profession will change significantly within five to ten years, Rizk says. The response should not be denial, but preparation: skill adaptation, financial planning, and strategic positioning.

Digital twins to predict treatment outcomes

Looking ahead, his team is already working on two next-stage developments. The first is AI-assisted quality control: locally deployed language models that review reports against clinical guidelines in real time.

“AI will watch me,” he said. “And I will watch AI with AI.”

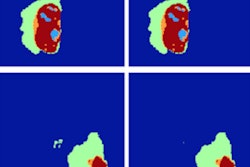

The second is digital twins: patient-specific computational models integrating imaging, radiomics, and clinical data to predict treatment outcomes. Many of the relevant patterns are invisible to the human eye. That, he says, is exactly why the technology matters.

Switzerland provides a uniquely enabling environment for this kind of work. It combines strong academic institutions such as ETH Zurich and EPFL with a highly developed private imaging sector, significant R&D investment, and a regulatory approach that favors sector-specific governance over a single overarching AI law.

Initiatives like the Swiss Personalized Health Network and BioMedIT support secure data infrastructure, while major industry players such as Roche and Novartis invest heavily in data-driven healthcare. The result is a system with enough structure to ensure safety, and enough flexibility to allow real-world experimentation, making it an ideal environment for translating AI from research into clinical practice.