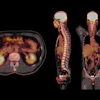

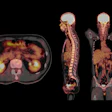

Researchers from Germany used augmented reality (AR) technology to overlay CT scans onto patients with spinal lesions -- lowering the radiation dose required for CT-guided surgery while maintaining high navigational accuracy, according to an article published online on 5 April in the European Spine Journal.

The group, led by senior author Dr. Christopher Nimsky, chair of neurosurgery at the University of Marburg, developed a method for CT-guided spine surgery that combined AR technology -- including an operating microscope and anatomical mapping software -- with a mobile CT scanner.

For the proof-of-concept study, Nimsky and colleagues acquired the CT scans of 10 patients who underwent surgery for intradural and extradural spinal lesions between June and August 2018. They used automatic image segmentation software to delineate the contours of the vertebrae, tumors, and spinal implants, and then converted the segmented images into 3D AR models.

Next, they loaded these 3D models into the operating microscope, which allowed the surgeons to see the models superimposed onto the corresponding patient anatomy in real time. The surgeons could also examine the 3D models on a separate screen that displayed the patient's intraoperative CT scans.

After the surgeries were completed, the researchers found that their technique offered high navigational accuracy, with an average target registration error of approximately 1 mm. The AR technique also let the surgeons verify navigational precision throughout the operation, aided by a warning feature that alerted them whenever the registration shifted.
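Target registration error (TRE) is generally defined as the distance between a target point's position after registration and its true position. The minimal sketch below computes a mean TRE over corresponding 3D points and adds a simple shift check in the spirit of the warning feature described above. The function names, the example coordinates, and the 2 mm threshold are hypothetical illustrations; the article does not describe how the authors' software measures TRE or detects registration shifts.

```python
import math

# A 3D point in millimetres (x, y, z).
Point = tuple[float, float, float]


def target_registration_error(registered: list[Point], reference: list[Point]) -> float:
    """Mean Euclidean distance (mm) between registered targets and their reference positions."""
    if not registered or len(registered) != len(reference):
        raise ValueError("need equal, non-empty lists of corresponding points")
    return sum(math.dist(p, q) for p, q in zip(registered, reference)) / len(registered)


def registration_shift_warning(baseline: Point, current: Point, threshold_mm: float = 2.0) -> bool:
    """Return True if a tracked reference point has drifted more than threshold_mm.

    The 2 mm default is a hypothetical value chosen only for illustration.
    """
    return math.dist(baseline, current) > threshold_mm


if __name__ == "__main__":
    # Hypothetical coordinates: each registered target is 1 mm from its reference.
    registered = [(10.0, 0.0, 0.0), (0.0, 20.0, 0.5)]
    reference = [(10.6, 0.8, 0.0), (0.0, 20.0, 1.5)]
    print(round(target_registration_error(registered, reference), 2))  # 1.0
    print(registration_shift_warning((0.0, 0.0, 0.0), (0.0, 0.0, 3.0)))  # True
```

Averaging Euclidean distances (rather than squared distances) keeps the result in millimetres, which matches how a figure like "approximately 1 mm" is usually reported.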

Incorporating AR navigation registration into the spine surgery workflow added only five minutes to the total procedure time. It also allowed the surgical team to rely less on intraoperative CT guidance, lowering overall CT radiation exposure by roughly 70%. Mean radiation exposure in the study ranged from 0.35 to 0.98 mSv in the cervical spine, from 2.16 to 6.92 mSv in the thoracic spine, and from 3.55 to 4.2 mSv in the lumbar spine, compared with an estimated 10.3 mSv reported in the current literature.
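The per-region doses can be set against the 10.3 mSv literature estimate with a simple percent-reduction calculation. This is only an illustrative comparison using the numbers quoted above: the article does not explain how the roughly 70% overall reduction was derived, and the constant and function names in the code are hypothetical.

```python
# Literature estimate quoted in the article, in mSv.
LITERATURE_ESTIMATE_MSV = 10.3

# (low, high) radiation exposure reported per spinal region, in mSv.
STUDY_DOSE_RANGES_MSV = {
    "cervical": (0.35, 0.98),
    "thoracic": (2.16, 6.92),
    "lumbar": (3.55, 4.2),
}


def percent_reduction(baseline: float, observed: float) -> float:
    """Percentage by which `observed` falls below `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - observed) / baseline * 100.0


if __name__ == "__main__":
    for region, (low, high) in STUDY_DOSE_RANGES_MSV.items():
        smallest = percent_reduction(LITERATURE_ESTIMATE_MSV, high)  # upper dose bound
        largest = percent_reduction(LITERATURE_ESTIMATE_MSV, low)    # lower dose bound
        print(f"{region}: {smallest:.1f}% to {largest:.1f}% below {LITERATURE_ESTIMATE_MSV} mSv")
    # cervical: 90.5% to 96.6% below 10.3 mSv
    # thoracic: 32.8% to 79.0% below 10.3 mSv
    # lumbar: 59.2% to 65.5% below 10.3 mSv
```

Reporting a range per region, rather than a single number, reflects that the article gives dose ranges rather than one value for each section of the spine.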

The surgeons primarily used AR to facilitate the identification of tumor borders in this study, but they suggested that physicians could also use the technology to pinpoint vascular spinal lesions, guide pedicle screw implantation, and aid bone resection, among other tasks.

"AR greatly supports the surgeon in understanding the 3D anatomy, thereby facilitating surgery," the authors wrote. "Using the implemented technique and workflow offers a wide variety of potential applications for AR support in spinal surgery."

AR technology offers "new avenues" for facilitating surgical procedures in the spine, Nimsky and colleagues added. Though they were not able to quantify the immediate benefits that the patients may have received, the researchers emphasized the consistently high reliability of the technique in their small cohort.

"Without doubt, this technology will find its way into routine clinical applications, while optimal solutions need to be further developed. ... It is therefore likely that not only the AR devices have to be adapted to the surgical procedure, but also the surgical procedure itself will be changed by AR resulting in more minimally invasive procedures," they concluded.