Dear AuntMinnieEurope Member,

Large studies involving the use of artificial intelligence (AI) are in short supply, so a U.K. study published this week is bound to generate considerable attention, particularly because it involved half a million patients seen at a top London hospital over 10 years.

The research shows computer vision algorithms can greatly reduce delays in identifying and acting on abnormal chest x-rays, noted the authors, who think their findings may be relevant for MRI and CT too. Go to our news report in the Imaging Informatics Community.

Dr. Paul Dubbins, alias the grumpy old man in Vienna, has no plans to report chest x-rays. He's amazed how retired colleagues suddenly find the time to report vast numbers of chest x-rays -- provided the fee is generous. He also intends to avoid golf, gardening, and cruise holidays now that he's left full-time work. Don't miss his entertaining column.

Radiation exposure to the eyes is associated with an increased risk of cataracts. Eye lens shields can reduce radiation exposure by up to 64% during head CT exams, but do they cause significant artifacts? A German group has addressed this question in the CT Community.

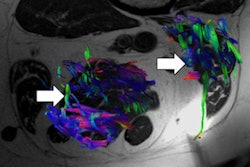

Sarcopenia, the loss of muscle mass, is important in older people because it's a strong predictor of mortality and may have a prognostic role in cancer patients. Spanish radiologists won a cum laude award at RSNA 2018 for showing how advanced MRI sequences and associated parameters are making rapid progress here. Visit our MRI Community.

Checklists are important to promote safety and teamwork, says Dr. Caroline Rubin, vice president of the U.K. Royal College of Radiologists (RCR). The RCR has just released practical and timely guidelines on this topic, and you can download them free of charge.

A new study has highlighted the potential of automatic classification based on a single MRI contrast to differentiate glioblastoma from brain metastasis. The researchers trained a machine-learning algorithm to analyze conventional, postcontrast T1-weighted MR examinations.
