VIENNA - With 142 of 603 sessions at ECR 2026 carrying an AI tag (23.5%) according to an overview by Yaozhi Wang for RadAI Slice, the conversation has moved on. No one is debating whether AI belongs in radiology anymore. The harder questions are about what happens when it fails and why.

Diversity isn’t optional

For Dr. Martin Willemink, PhD, the answer begins long before an algorithm is built, in the data it learns from. The radiologist and co-founder of medical imaging data company Segmed opened with a premise that sounds obvious but is often overlooked: Data quality isn’t just image quality. It’s diversity.

Dr. Martin Willemink, PhD. All pictures courtesy of Claudia Tschabuschnig

“If you train only on the best images, your model will only work in the best populations,” he said. “You need diversity in artifacts, resolution, noise, vendors, geography, age, sex, race.”

The mechanics are straightforward. Train a model only on CT scanners from one vendor and it may fail on another. Public datasets can help early research but are often mislabeled. Commercial datasets exist but are expensive. Synthetic data may fill gaps while potentially introducing new biases.

The risks aren't abstract. The need is real. As recently as 2020, more than 70% of AI models submitted for U.S. Food and Drug Administration (FDA) approval were trained on data from just three U.S. states: California, Massachusetts, and New York.

“Will it work in the Deep South? In Europe? Probably not,” Willemink noted. The keyword, repeated throughout the talk, was diversity.

“Proactively look at your data,” Willemink said. “Make sure underrepresented populations are present.”
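One way to act on that advice is a simple representation audit of a dataset's metadata before training. The sketch below is illustrative only: the function name `underrepresented`, the 5% threshold, and the sample records are assumptions made for this example, not anything presented in the session.

```python
from collections import Counter

def underrepresented(records, field, expected=(), min_share=0.05):
    """Return {value: share} for values of `field` below `min_share`.

    `expected` lists values that should be present; any that are missing
    entirely are reported with a share of 0.0.
    """
    counts = Counter(r[field] for r in records)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no records to audit")
    values = set(counts) | set(expected)
    shares = {v: counts.get(v, 0) / total for v in values}
    return {v: s for v, s in shares.items() if s < min_share}

# Hypothetical metadata, invented for illustration (not real data).
records = (
    [{"vendor": "A", "region": "California"}] * 90
    + [{"vendor": "B", "region": "New York"}] * 8
    + [{"vendor": "C", "region": "Texas"}] * 2
)

# Vendor C falls under the 5% threshold; vendor D is expected but absent.
flagged_vendors = underrepresented(records, "vendor", expected=("D",))
```

The same check can be repeated for region, age band, sex, or any other field the metadata records. The threshold is a judgment call that depends on the dataset and the clinical task.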

Hidden signals add another layer of risk. AI models can learn shortcuts, sometimes associating hospital logos, scanner artifacts, or other unintended markers with disease. The output may look right. The reasoning is not.

Seeing through knowing

At the heart of radiology, a radiologist once told Dr. Mohammad Hossein Mehrizi, PhD, are "doctors with golden minds and golden eyes." Mehrizi, an organizational researcher at Leiden University Medical Center studying how experts interact with technology, wanted to understand what happens to those golden eyes when AI enters the workflow.

Dr. Mohammad Hossein Mehrizi, PhD.

Drawing on the concept of the medical gaze, he described how physicians do not simply see images; they see them through what they already know. Borrowing from philosopher Michel Foucault, he argued that this cognitive structure shapes attention and can create blind spots.

“We see through knowing,” Mehrizi explained. “Try to look at something without naming it in your head. It’s very difficult.”

Mehrizi described three types of attention in radiology: focal attention, which concentrates on a specific abnormality; lateral attention, which scans the broader image for unexpected findings; and strategic attention, which monitors how attention is distributed. AI interfaces, he argued, often push users toward focal attention, sometimes at the expense of peripheral awareness.

In eye-tracking studies, radiologists interacting with AI showed different behavior patterns: looking at AI suggestions, looking through them, looking beyond them, or even actively looking against them. The most dangerous pattern wasn't overreliance or dismissal.

“The most dangerous situation,” Mehrizi said, “is when both human and AI look at the same thing, and drift together.”

He also challenged a common promise of AI: removing routine tasks.

“Routine tasks flex your attention muscles,” he said. “If you remove all of them, you also remove the pauses that help reset attention.”

The bias loop

Dr. Paul Yi, a pediatric radiologist at St. Jude Children’s Research Hospital, brought the discussion back to data and ethics.

Dr. Paul Yi.

“AI can democratize expertise,” he said. “But if the data has bias, you scale that bias at unprecedented levels.”

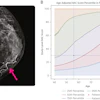

Yi’s research on bone-age prediction algorithms revealed systematic biases across demographic groups, including differences affecting girls and children at different age ranges.
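A subgroup audit of this kind can be sketched in a few lines: compute the error separately for each demographic group rather than once for the whole test set. The example below is a minimal illustration and not Yi's method. The function name `mae_by_group`, the field names, and the numbers (bone age in months) are all hypothetical.

```python
from collections import defaultdict

def mae_by_group(rows, group_key):
    """Mean absolute error of predictions, computed per subgroup."""
    errors = defaultdict(list)
    for row in rows:
        errors[row[group_key]].append(abs(row["predicted"] - row["actual"]))
    return {group: sum(errs) / len(errs) for group, errs in errors.items()}

# Hypothetical predictions in months, invented for illustration (not real data).
rows = [
    {"sex": "F", "predicted": 120, "actual": 132},
    {"sex": "F", "predicted": 100, "actual": 108},
    {"sex": "M", "predicted": 121, "actual": 120},
    {"sex": "M", "predicted": 97, "actual": 100},
]

result = mae_by_group(rows, "sex")
# In this made-up sample the error for girls (10.0) is five times that for boys (2.0),
# a gap that a single pooled average of 6.0 would hide.
```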

Even more concerning, AI models can learn shortcuts. Algorithms trained to detect pneumothorax sometimes identify chest tubes, the treatment, instead of the condition itself. Fracture detection models may rely on laterality markers rather than bone anatomy. The diagnosis may still appear correct, but the reasoning behind it is flawed.

Radiologists themselves also bring bias shaped by training, geography, and subspecialty experience.

“If reports don’t mention a finding, the AI will never learn it,” Yi noted. Biased data trains biased models, which then produce the next round of biased data.

The patient at the end of the pipeline

The discussion eventually turned to patients. If AI tools are deployed globally, will they perform equally across healthcare systems? Many models are validated primarily in the U.S. or Western Europe. But imaging infrastructure, disease prevalence, and patient populations vary widely across the world.

Mehrizi also raised concerns about patients increasingly turning to AI tools directly. Desperate for answers, some may upload scans into online systems or chatbots without clinical guidance.

“That can create a fake sense of knowing,” he warned.

Yi shared a simple example. A family member once read a radiology report mentioning a fracture and assumed it meant the bone was not broken. Medical language can be confusing even for well-educated patients. AI-generated explanations without clinical context could make that worse.

“You have to be careful,” Yi said. “Very careful.”

The real test

The session ended without simple answers. Mehrizi emphasized that attention in radiology is shaped not only by technology but also by the broader environment: workflow design, time pressure, social context, and even the fear of litigation.

Yi advocated for “forcing functions,” safety mechanisms that warn users when AI outputs cannot be trusted. Willemink returned to the foundation of the discussion: data diversity.

“It’s the keyword,” he said. “Include as many hospitals, vendors, and populations as possible. That’s the only way to build something that works for everyone.”

AI is already embedded in radiology practice. But the field is moving beyond the excitement of early benchmarks. The harder questions now involve integration, accountability, and real-world reliability. The challenge is no longer just whether AI can detect the fracture. It is whether the combined human-AI system still sees the patient.