Augmented reality (AR) and 3D printing provide clinicians with new alternatives for image-guided spine surgery that involve less radiation exposure and improve the accuracy of implanting pedicle screws, according to two recent studies from separate teams of Swiss and Italian researchers.

In the first study, Philipp Fürnstahl, PhD, and colleagues from the University of Zurich developed a technique using an augmented reality headset to visualize the anatomy of patients with spinal deformities, as well as the ideal insertion route of screw implants, while performing surgery. Their technique proved to be very precise when simulating spine surgery on lumbar phantoms, compared with conventional fluoroscopy guidance.

"Various navigation approaches have been proposed for spinal surgery due to the high demands placed on precision and safety. ... We presented a radiation-free navigation approach for spinal fusion surgery comprising surface-based registration and intuitive holographic navigation," the authors wrote.

Radiation-free navigation

Patients with spinal fractures or serious spine disorders such as scoliosis often need to undergo spinal fusion surgery, the success of which relies heavily on the precise insertion of pedicle screws into the vertebrae. The traditional method of using bony landmarks to guide freehand screw placement has long concerned clinicians because of its unreliability, with a mean accuracy as low as 68%, the researchers noted. Surgeons have had more success using conventional fluoroscopy for surgical navigation, but the risk of excessive radiation exposure with this method has raised concerns.

Several groups have recently begun exploring new ways to guide spine surgery that are more accurate and involve less radiation exposure than standard methods, without substantially increasing costs and operation times, the researchers added.

For the present study, Fürnstahl and colleagues turned to augmented reality technology as a possible candidate for guiding screw insertion. They developed a technique that consists of placing markers on a target, creating virtual 3D models based on CT scans of the spine, and then registering these 3D models to the target anatomy (International Journal of Computer Assisted Radiology and Surgery, 15 April 2019).

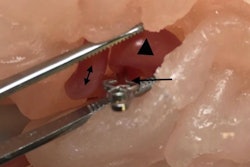

Using this setup, the researchers were able to simulate spinal fusion surgery on two lumbar phantoms. A surgeon wearing an AR headset was able to see digital markings that indicated where to drill the holes directly on the vertebrae. After drilling, the surgeon used AR technology to superimpose a 3D model of the vertebrae, along with a predesignated path for optimal screw insertion, onto the actual vertebrae.

On average, the surgeon's error with AR guidance was 2.77 mm for locating the entry points and 3.38° for screw orientation. Both figures were well within the threshold of acceptable measurements for effective screw insertion, according to the authors. The surgeon also needed approximately 125 seconds to register the 3D models with the real anatomy, about the same amount of time it takes with standard optical navigation (117 seconds).
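For readers who want to see what the two error figures measure, the following is a minimal sketch of one common way to compute them: entry-point error as the straight-line distance between the planned and actual entry points, and orientation error as the angle between the planned and actual screw axes. The article does not describe how the researchers calculated their figures, so the function names, the formulas chosen, and the coordinates below are all illustrative assumptions, not the study's method or data.

```python
import math


def entry_point_error_mm(planned, actual):
    """Straight-line (Euclidean) distance between two 3D points, in mm."""
    return math.dist(planned, actual)


def angular_deviation_deg(planned_dir, actual_dir):
    """Angle in degrees between the planned and actual screw axes."""
    dot = sum(p * a for p, a in zip(planned_dir, actual_dir))
    norm = math.hypot(*planned_dir) * math.hypot(*actual_dir)
    # Clamp to [-1, 1] so floating-point drift cannot break acos.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


# Hypothetical coordinates and directions, NOT values from the study.
planned_entry = (10.0, 20.0, 30.0)
actual_entry = (12.0, 21.0, 32.0)
planned_axis = (0.0, 0.0, 1.0)
actual_axis = (0.0, 1.0, 1.0)

print(entry_point_error_mm(planned_entry, actual_entry))
print(angular_deviation_deg(planned_axis, actual_axis))
```

With these made-up inputs, the entry point is off by about 3 mm and the axis by about 45°. Averaging such values over all screws would give mean errors comparable in kind to those reported.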

The results of this preliminary evaluation on lumbar spine phantoms demonstrated that AR image guidance could be viable for clinical applications, they concluded.

Improved accuracy, safety

Another, more widely applied alternative for image guidance during spine surgery is the use of patient-specific 3D-printed models. Despite relatively high manufacturing costs and long production times, early research has shown that 3D-printed spine models have the potential to improve the precision of spine surgery.

To test this, Dr. Riccardo Cecchinato and colleagues from Istituto Ortopedico Galeazzi in Milan performed surgical correction for 29 patients with a spinal deformity using either a standard freehand method with fluoroscopy or 3D-printed spine models for guidance.

The 3D-printed models were individually tailored for each patient based on preoperative CT scans. The surgical team used the 3D-printed models preoperatively to help formulate the optimal route for each screw insertion as well as intraoperatively to guide the surgery (European Spine Journal, 20 April 2019).

Upon comparing the two techniques, the researchers discovered that 3D-printed model guidance increased the rate of accurate screw insertions by a statistically significant degree while exposing the patients to considerably less radiation.

Fluoroscopy vs. 3D printing to guide screw insertion during spine surgery

| | Fluoroscopy | 3D-printed model |
|---|---|---|
| Proportion of accurately implanted screws* | 82.9% | 96.1% |
| Mean effective radiation dose | 0.82 mSv | 0.23 mSv |

Together, the two studies affirmed that integrating advanced visualization technologies into image-guided spine surgery offers distinct benefits. Improving the accuracy of pedicle screw insertion while also lowering radiation exposure through the two techniques may ultimately help improve patient outcomes.
