More from AuntMinnieEurope

Radiology pays tribute to Dutch pioneer Jacob Valk

April 18, 2024

Bayer and Hologic collaborate on CEM

April 15, 2024

Radiographers play pivotal research role in Gothenburg

April 15, 2024

Study links hearing loss to increased dementia risk

April 12, 2024

Attention turns to appropriateness criteria

April 12, 2024

General neuroradiology 5-day fellowship in Barcelona 2024

April 15, 2024 to April 19, 2024

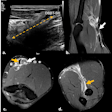

Pumping iron: Common MSK injuries from weightlifting

April 11, 2024

Next Generation Radiology Workflow Tools

April 10, 2024